Syskit's event loop

- Events, Reactor, Synchronous and Asynchronous

- Scheduling

- Component Lifecycle, Configuration, Startup and Shutdown

- Garbage Collection

- Component Reconfiguration

This page is the first to go deeper into Syskit's execution model. It presents the structure of Syskit's event loop, detailing how operations such as configuring, starting or stopping components are sequenced during Syskit's execution.

Events, Reactor, Synchronous and Asynchronous

Fundamentally, Syskit's execution is event-based and follows the reactor pattern. External events are received and Syskit does "other things" in reaction to them. The most common reaction is to call a piece of code registered as a handler for the event. Schematically:
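In code, a reactor of this kind could look like the following minimal sketch. It is a plain-Ruby toy, not Syskit's API: `ToyReactor`, `on`, `receive` and `process_pending` are hypothetical names chosen for illustration only.

```ruby
# Toy reactor, NOT Syskit's actual API: every name here is hypothetical.
class ToyReactor
  def initialize
    # event name => list of handler blocks
    @handlers = Hash.new { |hash, name| hash[name] = [] }
    # events received from the outside world but not dispatched yet
    @pending = []
  end

  # Register a block to be called whenever `event_name` happens
  def on(event_name, &handler)
    @handlers[event_name] << handler
  end

  # Called by the outside world to report that something happened
  def receive(event_name, payload = nil)
    @pending << [event_name, payload]
  end

  # One pass of the loop: dispatch everything received so far
  def process_pending
    until @pending.empty?
      event_name, payload = @pending.shift
      @handlers[event_name].each { |handler| handler.call(payload) }
    end
  end
end

reactor = ToyReactor.new
reactor.on(:position_reached) { |pose| puts "reached #{pose}" }
reactor.receive(:position_reached, "home")
reactor.process_pending
```

The handler only runs when the loop processes the pending events, which is the defining trait of the pattern: the code reporting the event and the code reacting to it are decoupled.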

Unlike common event-based programming environments, Syskit does not only react to events, but can also cause them. Some events, such as the start and stop events of components, are controllable: Syskit can make them happen instead of only waiting for them to happen, by calling a piece of code called the event command.

Within Syskit, events are propagated. This means that Syskit tries to maintain a synchronous model in which, within the same propagation, events can cause the emission of other events (for instance from within an event handler). The new events are then propagated which …. For instance, compositions do not have to do anything to "be started". Therefore, their start event is emitted at the moment it is called. This propagation is synchronous: the whole propagation is done within a single event cycle.
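The difference between calling an event and emitting it, and the way handlers extend a propagation, can be sketched as follows. This is a toy model under stated assumptions: `ToyEvent`, `ToyCycle` and the event names are hypothetical and do not mirror Syskit's classes.

```ruby
# Toy model of synchronous propagation; all names are hypothetical.
class ToyEvent
  attr_reader :name, :handlers

  def initialize(name, &command)
    @name = name
    @command = command # nil means the event is not controllable
    @handlers = []
  end

  def controllable?
    !@command.nil?
  end

  # Register a handler, called when the event is emitted
  def on(&handler)
    @handlers << handler
  end

  # Ask the event to happen by running its command
  def call(cycle)
    raise ArgumentError, "#{name} is not controllable" unless controllable?

    @command.call(self, cycle)
  end
end

class ToyCycle
  attr_reader :emitted

  def initialize
    @queue = []
    @emitted = []
  end

  def emit(event)
    @queue << event
  end

  # Propagate until no handler emits anything new, all in the same cycle
  def propagate
    until @queue.empty?
      event = @queue.shift
      @emitted << event.name
      event.handlers.each { |handler| handler.call(self) }
    end
    @emitted
  end
end

# A composition has nothing to do to start: its command emits right away
composition_start = ToyEvent.new(:composition_start) { |event, cycle| cycle.emit(event) }
monitoring_enabled = ToyEvent.new(:monitoring_enabled) # not controllable
composition_start.on { |cycle| cycle.emit(monitoring_enabled) }

cycle = ToyCycle.new
composition_start.call(cycle)
p cycle.propagate
```

Both events end up emitted by a single `propagate` call, which is what "synchronous" means here: the event caused by the handler does not wait for another cycle.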

This event propagation is done within a single thread, which means that computation within event commands and handlers blocks the propagation. To avoid issues with e.g. blocking network calls, Syskit has infrastructure to execute remote calls asynchronously. The event command executes in a separate (blocking) thread and the event emission is delayed until that command finishes, possibly in a later event cycle.
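The asynchronous case can be sketched with a worker thread and a thread-safe queue. This is only an illustration of the idea, assuming a hypothetical `ToyAsyncLoop` class; Syskit's actual infrastructure is not shown here, and `sleep` merely stands in for a blocking remote call.

```ruby
# Toy model of asynchronous commands; all names are hypothetical.
class ToyAsyncLoop
  attr_reader :cycle_index, :emissions

  def initialize
    @completed = Queue.new # thread-safe, filled by the worker threads
    @cycle_index = 0
    @emissions = []        # [cycle index, event name] pairs
    @workers = []
  end

  # Run a blocking command outside of the main thread
  def call_async(event_name, &blocking_command)
    @workers << Thread.new do
      blocking_command.call
      @completed << event_name
    end
  end

  # One event cycle: emit the events whose command has finished,
  # without ever waiting for the commands that are still running
  def run_cycle
    @cycle_index += 1
    @emissions << [@cycle_index, @completed.pop] until @completed.empty?
  end

  def wait_for_workers
    @workers.each(&:join)
  end
end

event_loop = ToyAsyncLoop.new
event_loop.call_async(:component_start) { sleep 0.05 } # stands in for a remote call
event_loop.run_cycle        # the command is most likely still running here
event_loop.wait_for_workers # only to keep the example short
event_loop.run_cycle        # the emission happens in this later cycle
p event_loop.emissions
```

The main thread never blocks inside `run_cycle`; it only collects the results that are already available.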

Scheduling

The scheduler is the object responsible for determining which start commands can be executed at the beginning of a given cycle, and for executing them. Syskit's default scheduler is the temporal scheduler. We will see some characteristics of this scheduler in this section.

First and foremost, a component can be scheduled only if:

-

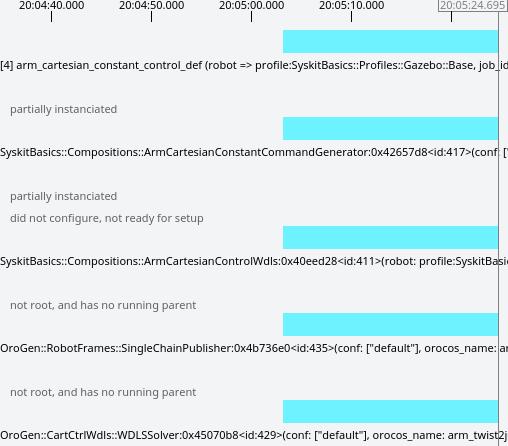

it has all its parameters set. This is a common problem, that some arguments are unset and the network does not run. This can be caught in tests. At runtime, in the IDE, this is leads to the

partially instanciatedmessage:

-

its execution agent is ready. Agents are expected to have a ready event that is emitted when the agent reached a state where the components it supports can be executed.

In addition, the basic scheduling rules are:

- a component may be started only if at least one of its parents in the dependency relation is running (i.e. the parent's start event has been emitted).

- a component may be started only if all its inputs are connected.
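Taken together, the preconditions and the basic rules amount to a predicate along the following lines. This is a hedged sketch and not the temporal scheduler's code: `ToyTask`, `missing_arguments` and `schedulable?` are hypothetical, and the special case for tasks without parents is an assumption made so that toplevel tasks can start at all.

```ruby
# Hypothetical plain-Ruby model of the rules above, not the scheduler's code.
ToyTask = Struct.new(:name, :arguments, :agent_ready, :parents,
                     :inputs_connected, :running, keyword_init: true)

# Names of the arguments that are still unset
def missing_arguments(task)
  task.arguments.select { |_name, value| value.nil? }.keys
end

def schedulable?(task)
  return false unless missing_arguments(task).empty? # all parameters set
  return false unless task.agent_ready               # execution agent is ready

  # ASSUMPTION: a task without parents (toplevel) is not blocked by this rule
  parent_running = task.parents.empty? || task.parents.any?(&:running)
  return false unless parent_running                 # at least one running parent

  task.inputs_connected                              # all inputs connected
end

parent = ToyTask.new(name: "parent", arguments: {}, agent_ready: true,
                     parents: [], inputs_connected: true, running: false)
child = ToyTask.new(name: "child", arguments: { "period" => 0.1 }, agent_ready: true,
                    parents: [parent], inputs_connected: true, running: false)

p schedulable?(parent)
p schedulable?(child)  # blocked until the parent is running
parent.running = true
p schedulable?(child)
```

A list such as the one returned by `missing_arguments` is the kind of information one would want to check in tests, before the problem shows up at runtime.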

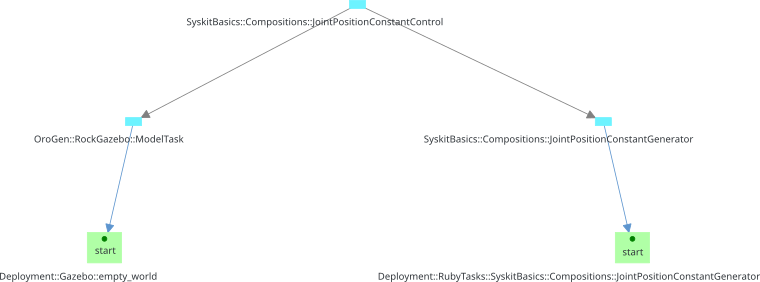

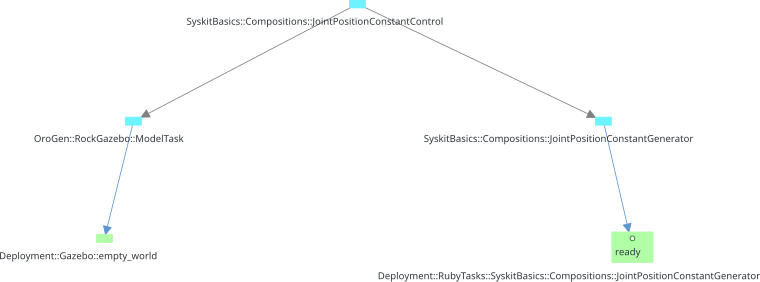

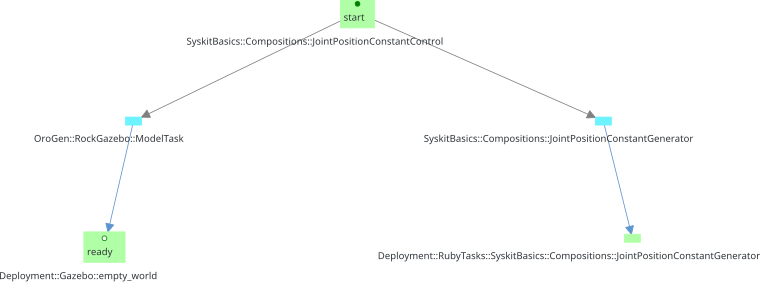

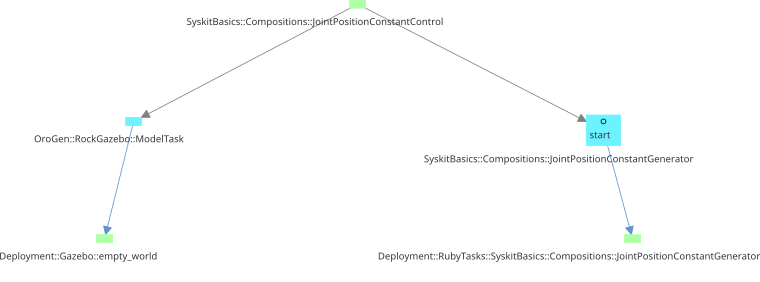

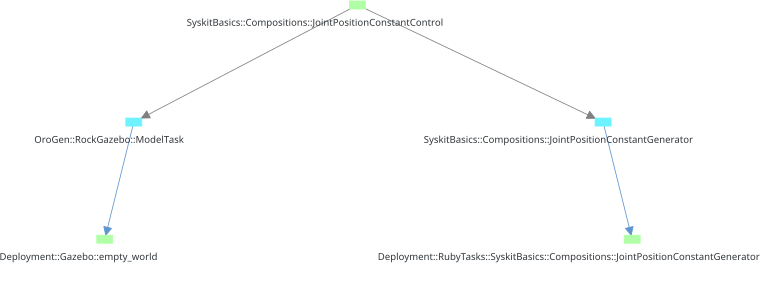

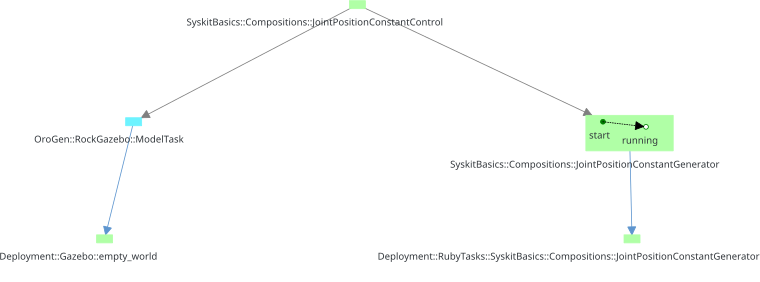

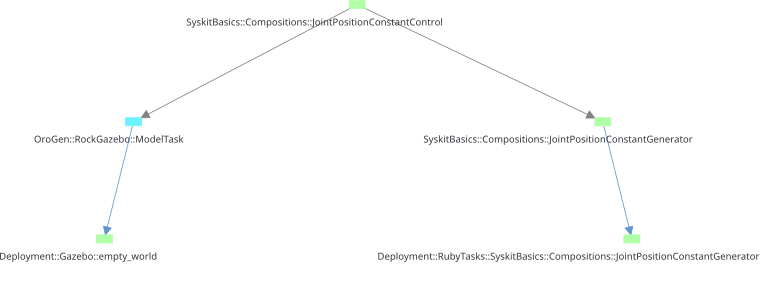

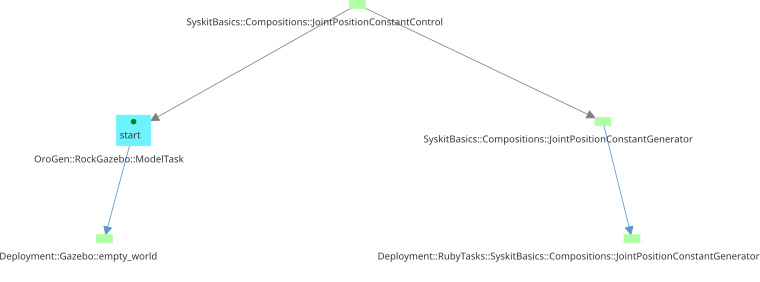

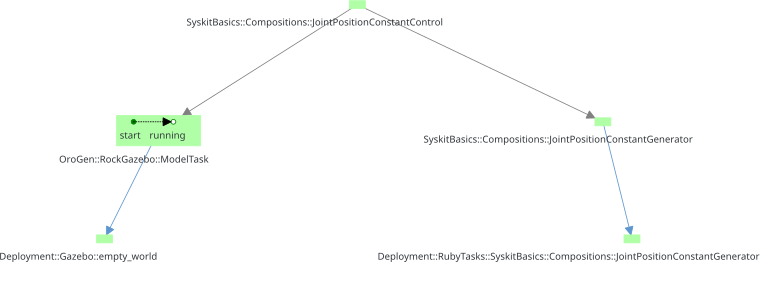

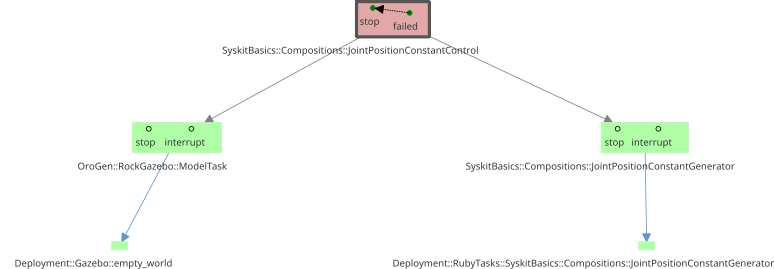

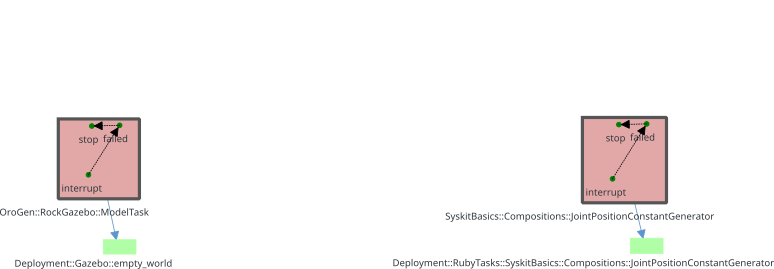

To illustrate this, let's have another look at the startup "from scratch" of the safe pose job. Each image represents one execution cycle. An empty circle is an event command and a full circle an event emission. See how the compositions are both called (scheduled) and emitted in the same cycle, while the components' start events are emitted in a different cycle than the one in which they were called. Finally, the cycle where seemingly nothing happens is due to the component configuration, which is not represented by an event.

Under these rules, "a component is running" means that the system intends to achieve the component's role, not that it is actually performing that role (yet): the component's children may not be running yet. For instance, a running safe position composition represents that the system intends to reach that position, but it will not actually be reaching that position until the composition's constant generator and arm model are running. This will be an important distinction when building more complex, coordinated systems.

Note that this is a simplification that is "good enough" for now. These are default rules that can be overridden explicitly on a case-by-case basis. There will be more about this in the coordination part.

Component Lifecycle, Configuration, Startup and Shutdown

Rock components have a lifecycle of their own. This lifecycle is what makes a generic coordination of them possible: it is possible to expect a standardized behavior. The nominal lifecycle (that is, without error states) is the following state machine:
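As a textual stand-in for that state machine, the nominal transitions can be written as a small lookup table. The transition names `configure`, `start`, `stop` and `cleanup` are the ones used on this page; the state names are an assumption based on the usual Rock/RTT task states (PreOperational, Stopped, Running), and `next_state` is a hypothetical helper.

```ruby
# Nominal lifecycle only (no error states).
# ASSUMPTION: state names follow the usual Rock/RTT task states.
NOMINAL_TRANSITIONS = {
  %i[pre_operational configure] => :stopped,
  %i[stopped start] => :running,
  %i[running stop] => :stopped,
  %i[stopped cleanup] => :pre_operational
}.freeze

# Returns the state reached by applying `transition` in `state`
def next_state(state, transition)
  NOMINAL_TRANSITIONS.fetch([state, transition]) do
    raise ArgumentError, "cannot #{transition} from #{state}"
  end
end

state = :pre_operational
%i[configure start stop cleanup].each do |transition|
  state = next_state(state, transition)
  puts "#{transition} -> #{state}"
end
```

Because every component follows the same table, a supervisor can drive any of them without component-specific knowledge.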

This is the lifecycle of a single component. Sequencing of the startup and shutdown of a network is more complicated. Syskit relies on some assumptions to make it possible:

- A component's `configure` transition is expected to be standalone, i.e. not rely on other components. The exception to this rule are devices that rely on data bus components (e.g. a CAN device on a CAN bus). We will see those in the advanced coordination section.

- Components have the ability to create new ports dynamically, which is usually controlled by their configuration. Syskit may therefore not expect all ports to be available before the component is configured. However, Syskit restricts dynamic port creation to `configure`, and expects these ports to be destroyed during `cleanup`.

With these assumptions, Syskit can generically sequence the startup and shutdown of a component network:

- create new connections between non-dynamic ports that involve at least one component that is not configured. Connections are parallelized. Rationale: the "components not configured" constraint ensures that we do not disrupt existing components before the rest of the network is ready to run.

- configure components in parallel. Rationale: the component configurations are standalone.

- apply the remaining connection modifications (addition and removal, in parallel)

- start components (in parallel)

Components are stopped through Syskit's garbage collection mechanism. Connections are garbage collected when the corresponding component(s) are.

All actions that involve a direct interaction with the component (sending the start command, writing properties, …) are done asynchronously in separate threads, and are done in parallel if more than one component is being set up. All actions that involve code written within the Syskit app itself are executed sequentially within the main thread.
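The four-step sequence described above, with each step parallelized internally, can be sketched as follows. This is a toy under stated assumptions: `in_parallel` and `deploy` are hypothetical helpers, the split between early and late connections is given as input rather than computed, and each action only records itself in a log instead of talking to a component.

```ruby
# Run one block per item in its own thread, and wait for all of them
def in_parallel(items, &block)
  items.map { |item| Thread.new { block.call(item) } }.each(&:join)
end

# Hypothetical sketch of the startup sequence. Each phase is parallel
# internally, but a phase only begins once the previous one is finished.
def deploy(components, early_connections, late_connections)
  log = Queue.new # thread-safe record of what was done

  # 1. connections between non-dynamic ports
  in_parallel(early_connections) { |connection| log << [:connect, connection] }
  # 2. standalone configurations
  in_parallel(components) { |component| log << [:configure, component] }
  # 3. remaining connection changes (e.g. involving dynamic ports)
  in_parallel(late_connections) { |connection| log << [:connect, connection] }
  # 4. startup
  in_parallel(components) { |component| log << [:start, component] }

  Array.new(log.size) { log.pop }
end

steps = deploy(%w[generator arm],
               [%w[generator.out arm.in]],
               [%w[arm.dynamic_out generator.in]])
steps.each { |action, target| puts "#{action} #{Array(target).join(' => ')}" }
```

The order of entries within one phase may vary from run to run, but the phases themselves never interleave, which is the property the sequencing relies on.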

Garbage Collection

The garbage collection recursively stops tasks that are not useful for an active job, as in the following video. Note again that the compositions' stop events are emitted in the same cycle as they are commanded, while components are stopped asynchronously:
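The notion of "useful for an active job" can be sketched as a reachability computation over the dependency relation. This is a simplified illustration, not Syskit's garbage collector: `useful_tasks`, `garbage` and the task names are hypothetical, and permanent tasks or the order in which tasks get stopped are left out.

```ruby
# children: Hash mapping a task name to the names of the tasks it depends on

# Everything reachable from the active jobs through the dependency relation
def useful_tasks(active_jobs, children)
  useful = []
  queue = active_jobs.dup
  until queue.empty?
    task = queue.shift
    next if useful.include?(task)

    useful << task
    queue.concat(children.fetch(task, []))
  end
  useful
end

# Tasks that no active job needs anymore, i.e. the candidates for stopping
def garbage(all_tasks, active_jobs, children)
  all_tasks - useful_tasks(active_jobs, children)
end

children = {
  "new_job" => %w[generator arm],
  "old_job" => %w[old_controller arm]
}
all_tasks = %w[new_job old_job generator arm old_controller]

p garbage(all_tasks, ["new_job"], children)
```

Here `arm` survives because the remaining job still depends on it, while `old_job` and the controller only it used become garbage.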

Component Reconfiguration

As already mentioned, external processes are marked as permanent by default within Syskit. One of the main reasons is that configuring an external component may be fairly expensive (load maps, precompute expensive data structures, configure a device, …), and Syskit therefore attempts to reduce how often configuration is required.

When configuring components, Syskit will `cleanup` and `configure` a given component only if the component's configuration has changed since the last configuration. Otherwise, it only has to start it. `cleanup` is also done asynchronously to avoid blocking the main thread's execution.
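The decision described above can be sketched as a small function returning the transitions a component still needs before it runs. This is an assumption-laden illustration: `transitions_before_running` is a hypothetical helper, configurations are modelled as plain hashes, and how Syskit actually detects a configuration change is not covered here.

```ruby
# Transitions to apply to a stopped component before it is running again.
# configured:         whether the component was configured before
# last_configuration: the configuration applied at that time (a Hash here)
# wanted:             the configuration the new network asks for
def transitions_before_running(configured, last_configuration, wanted)
  return %i[configure start] unless configured     # never configured yet
  return %i[start] if last_configuration == wanted # nothing changed: reuse it

  %i[cleanup configure start]                      # changed: reconfigure
end

applied = { "gain" => 1.0 }

p transitions_before_running(false, nil, applied)
p transitions_before_running(true, applied, { "gain" => 1.0 })
p transitions_before_running(true, applied, { "gain" => 2.5 })
```

Skipping `cleanup` and `configure` in the unchanged case is where the savings come from when configuration is expensive.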

In the following video, we change the configuration file for our cartesian controller, reload the configuration and trigger a reconfiguration.

Note that the "reload" step only loads the modified files from disk into the Syskit process. The "reconfigure" step is what changes the configuration of already running tasks.

Another source of reconfiguration is to transition between two networks that select different component configurations. When this happens, Syskit will stop and reconfigure the affected components while deploying the new network.